SPONSORED CONTENT

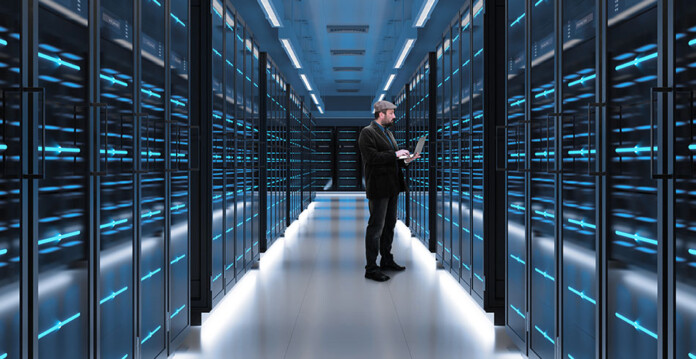

After decades of relatively stable demand, electricity consumption is projected to surge in many markets, with significant regional differences. This shift is driven by rising cooling and heating needs, as well as the increasing electrification of industry and mobility. Another major demand driver has been the rapid growth of data centres supporting enhanced digitalisation—an expansion expected to accelerate significantly with the additional load anticipated from the adoption of artificial intelligence (AI).

AI is emerging as one of the most transformative technologies of the 21st century, offering a generational opportunity to enhance economic competitiveness, boost productivity, and unlock new scientific and technological frontiers. At the same time, AI is highly energy-intensive, and the infrastructure required to support its growth has become a strategic priority.

According to the International Energy Agency (IEA), data centres will account for approximately 945TWh of electricity demand globally by 2030, with AI being the primary driver of this growth. AI-related electricity demand will account for around 10% of global electricity demand growth to 2030. In advanced economies, where demand has been largely stagnant for decades, this figure could exceed 20%.

Meeting the new challenges

Challenge No 1: Grid Capacity

As more data centres are developed, their electricity demand is projected to increase significantly in the short term, to as much as 945TWh by 2030. This is putting grid infrastructure around the world under pressure. Data centres can access power through several key pathways, each shaped by their energy needs and strategic priorities. While some data centres might co-locate with renewables and storage, most today rely fully or partially on the local electricity grid. However, electric grids were not necessarily designed to accommodate such rapid additional growth, particularly the type of growth associated with AI data centres.

As of 2025, thousands of data centres globally are facing delays in grid connection due to grid infrastructure constraints. The IEA predicts that up to 20% of data centre capacity could be at risk of connection delays between 2025 and 2030, including both new builds and expansions, due to insufficient grid capacity or permitting delays.

Challenge No 2: Equipment Availability

The expected increase in power demand from AI data centres will also require more critical grid equipment, such as transformers, switchgear and power quality devices. Due to limited manufacturing capacity and trade constraints, coupled with surging global demand and raw material shortages, there are currently delivery wait times for power transformers. Added to this, demand for power transformers is expected to increase significantly in the short term, driving the need for manufacturing capacity investments globally. In fact, according to the IEA in 2023, USD 1.2 trillion of cumulative investment would be required to bring enough manufacturing capacity online by 2030 for the world to stay on track for climate and energy goals.

While transformers will be essential to ensure speedy deployment of AI data centres globally, the story does not end there. Many other technologies, solutions and services are needed across the plan, build, and operate phases of the data centre value chain.

AI data centres will drive demand for different types of switchgear, particularly high voltage Gas-Insulated Switchgear (GIS), Air-Insulated Switchgear (AIS) and Hybrid Switchgear, as well as electrical disconnectors and Generator Circuit-breakers (GCBs).

Battery Energy Storage Systems (BESS) will be required for data centres co-located with generation, particularly renewables, to provide back-up power and resilience, as well as to smooth some of the fluctuations in AI workloads. As another example, both grid operators and AI data centres will require power quality solutions such as E-STATCOMs, voltage regulators and active harmonic filters, which provide grid stability services while also protecting sensitive equipment and supporting high-performance workloads like AI training.

Challenge No 3: Increased complexity such as demand and supply variability

Unlike traditional computing loads, which maintain relatively steady power consumption patterns, AI processing causes rapid, unpredictable fluctuations in power demand, leaving the grid scrambling to keep up and maintain reliable power. Examples include:

AI Training & Inference Cycles: In an AI data centre, different tasks have different energy intensities. AI training, where a model learns to perform a task, is extremely energy intensive, so switching between tasks can cause energy consumption to fluctuate. For example, suppose an AI data centre is maintaining a 100MW load. Software within the data centre triggers AI training to begin, and the load ramps from 100MW to 250MW in a few seconds. The load profile then continues to fluctuate, with bursts and dips caused by the internal phases of AI training.

Disconnections and Reconnections: The disconnection of a large AI data centre due to a fault, maintenance, or grid instability causes a sudden drop in load, which can be challenging for the grid operator to manage. The reconnection phase also presents numerous challenges. The ‘switch on’ of multiple data centres can create demand surges that overwhelm grid capacity, potentially triggering protective disconnections elsewhere in the system.
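To put the AI training example above in perspective, the short sketch below works out the average ramp rate implied by a jump from 100MW to 250MW. The article only says the ramp takes "a few seconds", so the durations tried here (2, 5 and 10 seconds) are assumptions for illustration, and the function name `ramp_rate_mw_per_s` is hypothetical rather than taken from any grid-planning tool.

```python
def ramp_rate_mw_per_s(start_mw: float, end_mw: float, duration_s: float) -> float:
    """Average rate of change of load over a ramp, in MW per second."""
    if duration_s <= 0:
        raise ValueError("duration_s must be positive")
    return (end_mw - start_mw) / duration_s


# Figures from the article: 100 MW steady load, 250 MW once training starts.
START_MW = 100.0
END_MW = 250.0

# Assumption: "a few seconds" is not quantified in the article,
# so a small range of plausible durations is tried instead.
for duration_s in (2.0, 5.0, 10.0):
    rate = ramp_rate_mw_per_s(START_MW, END_MW, duration_s)
    print(f"{duration_s:.0f} s ramp: about {rate:.0f} MW per second")
```

Under these assumed durations, the same 150MW step corresponds to an average of between 15MW and 75MW of additional load per second, which illustrates why the speed of the ramp, and not only its size, matters to the grid operator.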

Global leader in electrification leverages expertise to accelerate the AI boom

Hitachi Ltd and Hitachi Energy recently announced support for NVIDIA's 800VDC power architecture, developing a cleaner, more efficient way to power next-generation artificial intelligence (AI) infrastructure. This power architecture paves the way for larger, more energy-efficient “AI factories” at a global scale.

Modern AI workloads are pushing data centres beyond the limits of traditional power architectures, which were designed for much smaller compute loads. Hitachi Energy’s advanced grid-to-rack architecture supports the 800VDC rack design and streamlines how electricity flows from the grid into servers. The result is a simpler, more efficient, and more sustainable power system built for modern data centres that cuts energy waste, reduces cooling needs, and accelerates the deployment of hyperscale AI facilities.

From energy consumer to energy partner

For decades, data centres were passive and steady consumers of electricity, but that model no longer works. The AI factory of the future must act as an energy hub—flexible, digitally connected, and capable of supporting the grid while also drawing from it.

Hitachi Energy is helping make that transition real. As the global market leader in transformers, high-voltage technology, digitalised grids, and service, Hitachi Energy is investing USD 9 billion globally, the largest such investment in the industry, to expand manufacturing, R&D, engineering, and partnerships. These investments will be critical to meeting energy needs, including those of AI data centres, and to supporting a robust, future-ready electric grid.

For more information visit Hitachi Energy.